Introduction and Background

I have been reading many posts and articles about JSON and C#, and almost every one of them covers only one side: either JSON itself or the C# libraries that work with it. I want to cover both in one post, so that even if you have never heard of JavaScript Object Notation (JSON) or of the C# libraries available for parsing and processing JSON files, you can understand both concepts fully. I will try my best to explain every concept in these two areas. I also plan to publish this article on C# Corner and to share an offline copy of it as an eBook, so excuse me if you find me sliding between writing formats; I have to stick to both styles for this post to qualify as an article and as a miniature guide.

When I started programming, I learned that there were many data-interchange formats and serialization and deserialization techniques, and that objects, including their state, can be stored on disk so that the same data can be fetched later and you can carry on working with the objects exactly where you left off. Some of these techniques are tough to handle and understand, while others are tied to a particular framework. Then JSON comes into the picture. JSON gives you a familiar and simple way to serialize and deserialize objects at runtime so that their state can be stored for later use. In this post we are going to study the JSON notation for data interchange and how we can use this format in C# projects.

What is JSON?

I remember that when I was starting to learn programming there was this thing called Extensible Markup Language, better known as XML. XML was used to convert the runtime data of objects, program state or configuration settings into a storable string notation. These strings were human-readable. Programmers could even modify the values when they needed to, adding more values or removing the ones they didn’t want to see in the data.

Introduction of XML

For a really long time, and even today, XML has been used as a format for data interchange. Many communication protocols are built on top of XML, most notably SOAP. XML didn’t just power a few protocols; it also shaped some of the most widely used markup languages. XAML is XML-based, and HTML, which most of you are already familiar with, is a close relative (XHTML is HTML written as XML).

Figure 1: XML file icon.

XML has been of great use to many programmers for building applications that share data across multiple machines. The World Wide Web itself is built on pages written in HTML, a markup language with a very XML-like syntax (both descend from SGML).

The whole purpose of XML was to describe documents in plain text that is human-readable and that machines can use to define the state of applications, programs or interfaces.

- State of applications: You can store object state, which can be loaded later for further processing of the data. This also lets you maintain the state of the application itself.

- Program: XML can be used to define the configuration of programs when they start up. Microsoft has been using web.config and machine.config files to define how a program starts. Programmers and developers can easily modify these files as needed, which changes the way the program starts.

- Interfaces: HTML is used to define how the user interface looks. Windows Presentation Foundation uses XAML as its main language for designing interfaces. Android uses an XML-based markup language for defining UI controls.

<?xml version="1.0" encoding="UTF-8"?>

The line above is the XML declaration, and it is optional in XML 1.0. Note that the declaration alone is not a complete document: a well-formed XML document must contain exactly one root element.

That is not all. XML is much more than this. WCF, for example, supports SOAP communication, which means that you can download and upload data in XML format. ASP.NET Web API also supports data transfer in the form of XML documents.

Usage of XML

I want to show you how XML is used, so why not use a real-world example? RSS, for example, uses XML-based documents to deliver feeds to clients. I use this very feature on my blog to deliver blog posts to readers and other communities. The basic XML document for a single post looks like this:

<?xml version="1.0" encoding="UTF-8"?>

<rss version="2.0"

xmlns:atom="http://www.w3.org/2005/Atom"

xmlns:dc="http://purl.org/dc/elements/1.1/"

xmlns:media="http://search.yahoo.com/mrss/">

<channel>

<title>Learn the basics of the Web and App Development</title>

<atom:link href="https://basicsofwebdevelopment.wordpress.com/feed/" rel="self" type="application/rss+xml" />

<link>https://basicsofwebdevelopment.wordpress.com</link>

<description>The basics about the Web Standards will be posted on my blog. Love it? Share it! Found an error, ask me to edit the post! :)</description>

<lastBuildDate>Fri, 03 Jun 2016 09:48:29 +0000</lastBuildDate>

<language>en</language>

<generator>http://wordpress.com/</generator>

<cloud domain='basicsofwebdevelopment.wordpress.com' port='80' path='/?rsscloud=notify' registerProcedure='' protocol='http-post' />

<image>

<url>https://s2.wp.com/i/buttonw-com.png</url>

<title>Learn the basics of the Web and App Development</title>

<link>https://basicsofwebdevelopment.wordpress.com</link>

</image>

<atom:link rel="search" type="application/opensearchdescription+xml" href="https://basicsofwebdevelopment.wordpress.com/osd.xml" title="Learn the basics of the Web and App Development" />

<atom:link rel='hub' href='https://basicsofwebdevelopment.wordpress.com/?pushpress=hub'/>

<item>

<title>Hashing passwords in .NET Core with tips</title>

<link>https://basicsofwebdevelopment.wordpress.com/2016/06/03/hashing-passwords-in-net-core-with-tips/</link>

<comments>https://basicsofwebdevelopment.wordpress.com/2016/06/03/hashing-passwords-in-net-core-with-tips/#respond</comments>

<pubDate>Fri, 03 Jun 2016 08:42:49 +0000</pubDate>

<dc:creator><![CDATA[Afzaal Ahmad Zeeshan]]></dc:creator>

<category><![CDATA[Beginners]]></category>

<category><![CDATA[C# (C-Sharp)]]></category>

<guid isPermaLink="false">https://basicsofwebdevelopment.wordpress.com/?p=1313</guid>

<description>

<![CDATA[Previously I had written a few stuff for .NET framework and how to implement basic security concepts on your applications that are working in .NET environment. In this post I want to walk you to implement the same security concepts in your applications that are based on the .NET Core framework. As always there will be […]<img alt="" border="0" src="https://pixel.wp.com/b.gif?host=basicsofwebdevelopment.wordpress.com&blog=59372306&post=1313&subd=basicsofwebdevelopment&ref=&feed=1" width="1" height="1" />]]>

</description>

</item>

</channel>

</rss>

Of course I snipped out most of it, but you get the point of XML here. This data can be read by humans, and if a machine is set up to parse it, the data can be bound to objects at runtime. In much the same way, we can share data from one machine to another by serializing and deserializing objects.

What is the need of JSON, then?

JSON came into the picture in the early 2000s. The purpose of XML and JSON is similar: data interchange among devices, networks and applications. However, JSON’s syntax is very close to what C, C++, Java and C# programmers use every day in their “object-oriented programming” (pardon me, C programmers), because it is the syntax JavaScript uses for object notation. JSON provides a much more compact format for storing data. JSON data, as we will see in this guide, is much shorter than the equivalent XML, and in many cases can be (and should be…) used in network-based applications where every byte can become a bottleneck for your application’s performance and efficiency. I wanted to take this time to criticize XML, but I think you would take my words as personal and biased views. So first I will talk about JSON itself and how it is structured, and then I will show the differences between JSON and XML and which one to prefer.

The JSON format and its specification are published as ECMA-404. The document guides developers to define their APIs so that they behave as the standard describes. API developers, programmers and authors of serialization/deserialization software can get a lot of help from it in understanding how their programs should parse and stringify JSON content.

Structure of JSON

JSON is an open-standard document format for human-readable and machine-understandable serialization and deserialization of data. Simply put, it is used for data interchange. One benefit of JSON is that it is very compact compared to an XML document holding the same data. JSON stores data in the form of key/value pairs. Another benefit of JSON is that (just like XML) it is language-independent. You can work with JSON data in almost any programming language that can handle strings. Almost every programming framework that I can think of supports JSON-based data interchange. For example, ASP.NET Web API supports transferring JSON data to and from the server.

The basic structure of a JSON document is very simple. Traditionally, every JSON document has either an object or an array at its root; the original specification (RFC 4627) required this, while ECMA-404 and the later RFCs also allow any other JSON value at the root. The object or array can be empty; it does not need to contain anything.

A sample, and simple JSON document can be the following one:

{ }

What that means is something I am going to get into a bit later. At the moment just understand that this is a blank JSON document describing an empty object. A JSON document can contain other data in the form of key/value pairs, whose values are of the following types:

- Object

- Array

- String; a name or any other text.

- Number; an integer, fractional or exponential numeric value.

- Boolean; true or false

- Null.

Note that JSON doesn’t support all of the JavaScript keywords; for example, you cannot use “undefined” in a valid JSON document. Nothing stops you from typing it into your files, but a conforming parser will reject such values. One more thing to consider is that a JSON file is not executable: although it shares JavaScript’s object notation, it doesn’t allow you to create variables. If you try to add variables to it, the parser will generate errors. Now I would like to go through the valid data types in JSON, what they are and how you should use them in your own JSON data files.
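To make the point about “undefined” and variables concrete, here is a minimal sketch in JavaScript. It assumes a runtime with the built-in JSON object (a browser console or Node.js); the helper name isValidJson is my own and exists only for illustration.

```javascript
// Hypothetical helper: returns true when the text is valid JSON.
function isValidJson(text) {
  try {
    JSON.parse(text);
    return true;
  } catch (err) {
    // JSON.parse throws a SyntaxError for anything outside the JSON grammar.
    return false;
  }
}

console.log(isValidJson('{ }'));                    // an empty object is valid
console.log(isValidJson('{ "value": null }'));      // null is a JSON literal
console.log(isValidJson('{ "value": undefined }')); // undefined is not
console.log(isValidJson('var x = { };'));           // statements are not JSON
```

The first two calls report valid documents, while the last two are rejected by the parser.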

JSON data types

JSON data types are the kinds of values that can be used in JSON files. In this section I want to clarify the values they can hold and how you can use them in your own data-interchange formats.

JSON Object

At the root of a JSON document there is usually either a JSON object or a JSON array. In JavaScript and in many other programming languages an object is denoted by curly braces, “{ }”. JSON uses the same notation for objects. In JSON files, objects contain only their properties. The value of a property can be of any JSON data type, including another object. I will explain how that works later in this guide, but for now just consider that each runtime object is mapped to one JSON object in the data file.

In an object, the properties and their values are enclosed between the opening and closing curly braces, in the form of key/value pairs. Each key is separated from its value by a colon, “:”, and multiple pairs are separated by commas, “,”. An example of a JSON object is as follows:

{

"name": "Afzaal Ahmad Zeeshan",

"age": 20

}

Even a beginner C#, Java or C++ programmer can tell what type each of these properties holds. It is clear that the “name” property is of “string” type and the “age” property is a number (an integer here).

There are however a few things that you may need to consider at the moment:

- An object can contain any number of properties.

- An object can omit the properties altogether, making it an empty object.

- All property names (keys) are string values. You cannot omit the quotation marks around the key name. They are required; otherwise the parser will complain that it expected a string but found something else.

- A key is separated from its value by a colon, whereas multiple pairs are separated by commas. You cannot add trailing commas in JSON; conforming parsers will throw an error. For example, JSON.parse('[5, ]'); throws a SyntaxError.
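The rules above can be checked quickly in JavaScript. This is only a sketch using the built-in JSON.parse; the variable names are mine.

```javascript
// Parse the sample object from above and read its properties.
const person = JSON.parse('{ "name": "Afzaal Ahmad Zeeshan", "age": 20 }');
console.log(typeof person.name); // "string"
console.log(typeof person.age);  // "number"

// Keys always come back as strings, in the order they were written.
const keys = Object.keys(person);
console.log(keys); // [ 'name', 'age' ]

// An object may hold no properties at all.
const empty = JSON.parse('{ }');
console.log(Object.keys(empty).length); // 0
```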

I will demonstrate the errors in a later section, because at the moment I want to teach you the basics of JSON. But I will show you how these things work.

By now the JSON object type should be clear to you. One thing to keep in mind is that a JSON object is not only meant to be used as the root of a JSON document. It can also be used as the value of a property of another object, which allows a JSON document to express the entire data as relationships between objects; the only difference is that the objects are anonymous.

JSON Arrays

Like JavaScript, JSON also supports storing values in a sequence, series or list, whatever you would like to call it. Arrays are not complex structures in a JSON document. They:

- Are simple.

- Start with an opening square bracket, “[”, and end with a closing one, “]”.

- Can contain values in a sequence.

- Separate each value with a comma (not a colon).

- Can hold values of any type.

A sample JSON array would look something like this,

[ "Value 1", "Value 2" ]

You can store any type of value in the array, unlike in other programming languages such as C# or C++. The values are not required to be of the same type. That is something that pure C# or C++ programmers cannot fathom at first look. But, guys, this is JavaScript. I used the JSONLint service to verify this; later I will show you how it works in JavaScript.

Figure 2: JSONLint being used for verification of JSON validity.

In JavaScript, the elements of an array don’t need to have matching types. So, for example, you can do the following and get away with it without anything complaining about the types of the objects.

var arr = [ "Hello", 55, {} ];

So JSON, being a JavaScript-derived document format, follows the same convention. JSON leaves the idea of type casting to the programmer, because it matters only in statically typed programming languages such as C++ and C#. JavaScript, being dynamically and weakly typed, doesn’t care about this at all. So an array can take values of any type as long as it doesn’t violate the other rules.

The upcoming data types are similar to what other programming languages have, such as strings, numbers and boolean values. So I will not explain them in as much detail as I have explained these two.
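As a small illustration of the mixed-type array, the sketch below parses the JSON form of the array shown earlier and inspects each element. It uses only the built-in JSON.parse and Array.isArray; the variable names are mine.

```javascript
// A JSON array mixing a string, a number and an empty object.
const mixed = JSON.parse('[ "Hello", 55, { } ]');

// Inspect what the parser produced for each element.
const kinds = mixed.map((value) => typeof value);
console.log(kinds); // [ 'string', 'number', 'object' ]

// The result is an ordinary JavaScript array that keeps the original order.
console.log(Array.isArray(mixed), mixed.length); // true 3
```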

JSON Strings

String values are the values that contain characters. In many programming environments, strings are described as arrays of characters. In JavaScript, you can use either single quotes or double quotes to wrap string values. But the JSON specification allows only double quotes. Single quotes are fine in JavaScript source code, but they are not valid JSON: a conforming parser rejects them, and when you share the data over the network with a type-safe environment such as C++ or C#, the single quotes may cause a runtime error when the parser tries to read a string that starts like a character literal.

In JSON, strings look the same, and you have already seen them in the previous code samples. A few common examples of string values in JSON are as follows:

// Normal string

"Afzaal Ahmad Zeeshan"

// Same as above, but do not use in JSON

'Afzaal Ahmad Zeeshan'

// Escaping characters, outputs: "\"

"\"\\\""

// Unicode characters

"\u0123"

In the above example, you see that we can use many characters in our data. We can:

- Build normal strings, just like C# or C++ strings.

- Not use single quotes. The JSON standard forbids them, which matters because you may be sending the data over to other platforms.

- Escape special characters, which is required in strings. Otherwise, the string will fail to parse. You can use escape sequences similar to those provided in other languages, such as:

- \"

- \\

- \/

- \b

- \f

- \n

- \r

- \t

- \u followed by four hexadecimal digits

- Use Unicode characters (as shown in the last element of the list above); you can specify the Unicode character code there.
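The escape rules can be tried out with a short JavaScript sketch. I use String.raw here only so that the backslashes reach JSON.parse exactly as they are typed in the examples above; the variable names are mine.

```javascript
// String.raw keeps backslashes as typed, so the JSON text reads as in the article.
const escaped = JSON.parse(String.raw`"\"\\\""`);
console.log(escaped.length); // 3 characters: quote, backslash, quote

// \u followed by four hex digits yields the character with that code.
const unicode = JSON.parse(String.raw`"\u0123"`);
console.log(unicode.codePointAt(0).toString(16)); // 123

// \n inside the JSON text becomes a real line break in the parsed string.
const twoLines = JSON.parse(String.raw`"line one\nline two"`);
console.log(twoLines.split("\n").length); // 2

// A single-quoted string is valid JavaScript but not valid JSON.
let singleQuoteRejected = false;
try {
  JSON.parse("'Afzaal Ahmad Zeeshan'");
} catch (err) {
  singleQuoteRejected = err instanceof SyntaxError;
}
console.log(singleQuoteRejected); // true
```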

One thing to consider is that your objects’ attributes (properties) are also keyed using a string value.

{

"key": 1234

}

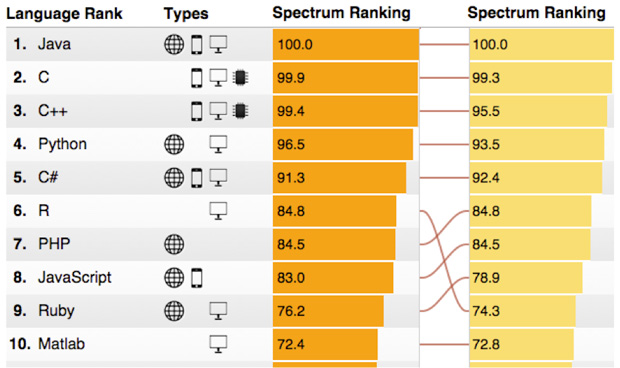

The value of a property can be anything, but the key must always be a string. Strings in JSON, JavaScript, C++, C# and Java are all alike and carry the same value for the same text. Unicode characters are supported and do not need to be escaped. I took this image from the original JSON website:

Figure 3: JSON string structure.

Thus, the strings are similar in JSON and other frameworks (or languages).

JSON Numbers

Just like strings, numbers are literal values in the JSON document. They are not wrapped in quotation marks; if they are, they are no longer numeric values but strings.

A few examples of numeric values are:

// Simple one

1234

// Fractional

12.34

// Exponential

12e34

You can combine the fractional and exponential forms too. You can also prefix the number with a minus sign for negative values; a leading plus sign is not allowed, although the exponent may carry either sign. In JSON these can also be used as property values. If you take a look at the object example a few sections above, you can see that I used an integer as the value of the age property. A few things that you should consider while working with JSON numbers are:

- In exponential form, “e” and “E” are equivalent.

- A number must never end with “e”.

- A number cannot be NaN.

- Number encoding and variable size should be considered. JavaScript and other languages may differ in the size and precision they use for numeric values, which may cause an overflow or a loss of precision.
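These number rules can be sketched in JavaScript as follows. The helper name parses is hypothetical, and the large integer is just a value I picked above JavaScript’s safe integer range to show the precision issue.

```javascript
// Hypothetical helper: true when JSON.parse accepts the text.
function parses(text) {
  try {
    JSON.parse(text);
    return true;
  } catch (err) {
    return false;
  }
}

// "e" and "E" mean the same thing in the exponent.
console.log(JSON.parse("12e34") === JSON.parse("12E34")); // true

// A minus sign and a signed exponent are fine.
console.log(JSON.parse("-1.5e+3")); // -1500

// A leading plus, a dangling "e" and NaN are all rejected.
console.log(parses("+5"), parses("12e"), parses("NaN")); // false false false

// Integers beyond the safe range are parsed, but not exactly.
const big = JSON.parse("9007199254740993");
console.log(Number.isSafeInteger(big)); // false
```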

JSON Boolean

Boolean values indicate the result of a conditional test, such as “let him in if he is an adult”. JSON supports storing values in their literal boolean notation, much as other programming languages do. They must not be wrapped in quotation marks, as they are literals. If a boolean value is wrapped in quotation marks then it is not a boolean value; it is a string.

// Represents a true result

true

// Represents a false result

false

These must be written exactly as they are, in lowercase, because JavaScript is case-sensitive and so is JSON. You can use these values in cases where a yes/no answer makes more sense than any other type. For example, you can specify the gender of the object.

{

"name": "Afzaal Ahmad Zeeshan",

"age": 20,

"gender": true

}

I didn’t use “male”; instead I used “true”. On the other side I can then translate this value back with a condition such as,

if(obj.gender) {

// Male

} else {

// Female

}

This way we can store boolean values in addition to strings and numeric values. They help us structure the document in a much simpler, cleaner and programmer-readable format.

JSON null

Programmers of C-based languages understand what null is. I have been programming in languages such as C, C++, C#, Java and JavaScript, and I have used this keyword where an object does not exist. There are other programming languages, such as Haskell, where this doesn’t make much sense. But since we are just talking about JavaScript and languages like it, we know what null is. Null represents the case where an object doesn’t exist in memory. In JavaScript, it means the “intentional absence” of an object: the variable exists but has no value. In other languages it is the other way around; the object doesn’t even exist.

It just has a single notation,

null

It is a keyword and must be typed exactly as it is, in lowercase. Otherwise the parser will reject the document, just as it rejects “undefined”. Null helps you send data that doesn’t currently exist. For example, in C# we can use it to pass values from nullable database columns. There are nullable types in C#, which we can map to it. For example, the following JSON document would be mapped to the following C# object:

{

"name": "Afzaal Ahmad Zeeshan",

"age": null

}

C# object structure (class)

class Person {

public string Name { get; set; }

public int? Age { get; set; } // Nullable integer.

}

And if the field in the database is nullable, we can store the null in that database record. This way, JSON can be used to denote runtime objects in a plain-text string format for data interchange.
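The difference between a null value and a missing value is easy to see in JavaScript. The sketch below is only an illustration with the built-in JSON object; the email property is a made-up name that I use to show a key that is absent.

```javascript
const person = JSON.parse('{ "name": "Afzaal Ahmad Zeeshan", "age": null }');

// The key exists, and its value is null.
console.log("age" in person, person.age === null); // true true

// A key that was never sent is simply absent (undefined in JavaScript).
console.log("email" in person, person.email === undefined); // false true

// JSON.stringify keeps null but drops properties whose value is undefined.
const text = JSON.stringify({ age: null, email: undefined });
console.log(text); // {"age":null}
```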

Examples of use

I don’t want to stretch this one any longer. JSON has been widely accepted by many companies, even Microsoft. Microsoft used the XML-based web.config file for application configuration. For a while now, however, they have been upgrading their systems to read settings written in JSON. JSON is much simpler and easier to handle.

Microsoft started to use JSON format to handle the settings for their Visual Studio Code product. JSON allows extension of the settings, which helps users to add their own modifications and settings to the product.

I have been publishing many articles that use JSON data sources, and I think that if even I started to use JSON, it is a sign of the success of JSON over XML. 🙂

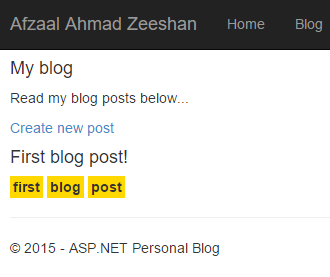

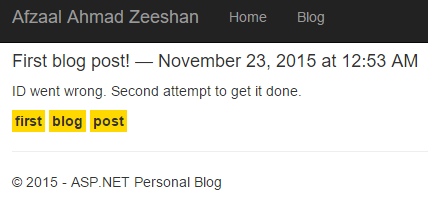

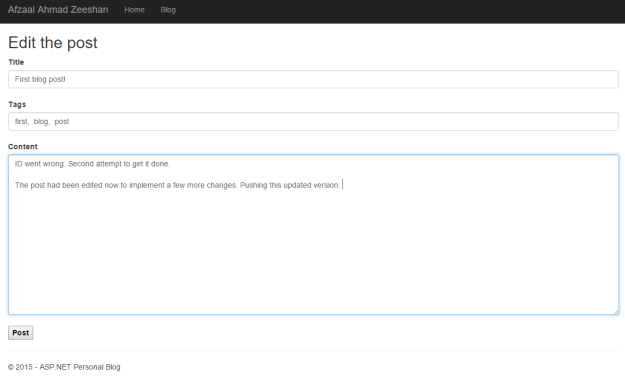

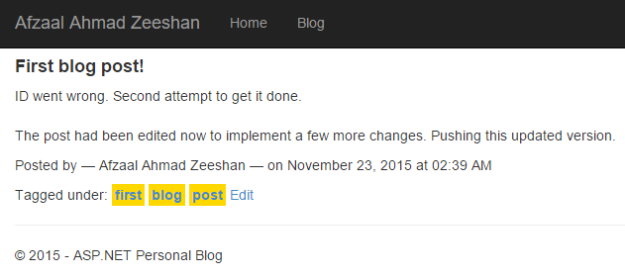

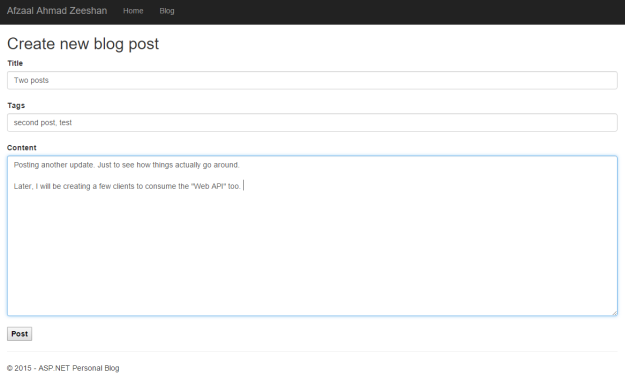

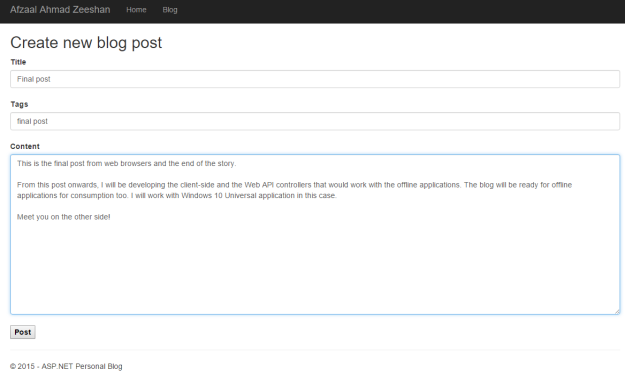

- Creating a “customizable” personal blog using ASP.NET

- Understanding ASP.NET MVC using real world example, for beginners and intermediate

There are many other uses of JSON, and my personal recommendation is to pick JSON whenever you can use it instead of XML. If you are interested in XML, or are required to use it, such as when working with SOAP, then use XML. Otherwise, consider using JSON.

Errors in JSON

No, these are not errors in JSON itself, but a few mistakes that you may make while writing JSON documents by hand. This is why you should always consider using a library.

Trailing commas

As already mentioned above, trailing commas in JSON objects or arrays cause a problem. The parser expects another property or value to come but unexpectedly hits a closing square or curly bracket. For example, the following are invalid JSON documents:

{ "k": "v", } // Ending at a comma

[ 123, "", ] // Ending at a comma

These can be overcome if you:

- First of all, write your own parser.

- Make it intelligent enough to know that if no property follows the comma, it can simply skip adding one.

- Treat a comma with no value after it in an array as the end of the array at the previous token.

However, existing parsers are not guaranteed to be that lenient, so my recommendation is to avoid trailing commas altogether.
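Here is a minimal JavaScript sketch of the two invalid documents above, followed by the “use a library” alternative. The helper name tryParse is hypothetical; JSON.parse and JSON.stringify are the built-in functions.

```javascript
// Hypothetical helper: reports whether the text parsed, and why not if it failed.
function tryParse(text) {
  try {
    return { ok: true, value: JSON.parse(text) };
  } catch (err) {
    return { ok: false, message: err.message };
  }
}

// Both documents from above end at a comma, so the parser rejects them.
console.log(tryParse('{ "k": "v", }').ok); // false
console.log(tryParse('[ 123, "", ]').ok);  // false

// Letting a library produce the text avoids the problem entirely.
const generated = JSON.stringify({ k: "v", list: [123, ""] });
console.log(generated); // {"k":"v","list":[123,""]}
console.log(tryParse(generated).ok); // true
```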

Ending with an “e” or “E”

If you end a number in exponential form with an “e”, you are likely to get a syntax error. You know what that means, don’t you? It is always better to write numbers in the proper format, following the specification.

Undefined

Each property name must be a string token. In JavaScript you can do both of the following:

var obj = { "name": "Afzaal Ahmad Zeeshan" };

// OR

var obj = { name: "Afzaal Ahmad Zeeshan" };

But in JSON you are required to write the key names as strings when defining object properties. In JSON, if you do the following you will get an error,

{

name: "Afzaal Ahmad Zeeshan"

}

Because name is not a string. A parser may take it for a variable defined somewhere, but JSON documents cannot be executed, so JavaScript variables have no place there. Some libraries may still return results for your documents even if you leave your property names unquoted. But don’t count on every parser being that forgiving; always follow the specification.
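The contrast between JavaScript source and JSON text can be sketched like this. It relies only on the built-in JSON object; the variable names are mine.

```javascript
// In JavaScript source, both object literals describe the same object.
const quoted = { "name": "Afzaal Ahmad Zeeshan" };
const unquoted = { name: "Afzaal Ahmad Zeeshan" };
console.log(quoted.name === unquoted.name); // true

// JSON.stringify always writes the key as a double-quoted string.
const text = JSON.stringify(unquoted);
console.log(text); // {"name":"Afzaal Ahmad Zeeshan"}

// Feeding the unquoted form to JSON.parse fails with a SyntaxError.
let failure = null;
try {
  JSON.parse('{ name: "Afzaal Ahmad Zeeshan" }');
} catch (err) {
  failure = err.name;
}
console.log(failure); // SyntaxError
```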

C# side of article

By this point I hope that JSON is as simple as 1… 2… 3 for you! If not, please let me know so that I can make it even clearer. In this section I want to talk about using JSON in your C# projects. I am going to talk about the libraries that are available for C#, or more specifically about the JSON.NET library. I will use this library to explain how JSON can be used to save the state of objects: how you can create a JSON file, how you can parse it to create objects at runtime, and how serialization and deserialization work.

This will be a real-world section covering the methods and practices that you can use to work with JSON in your own applications. I will cover most of the C# programming part here so that you can understand and learn how to program with JSON in your .NET environment.

JSON.NET library

I am specifically going to walk you through the JSON.NET library. JSON.NET is a widely used JSON parser for the .NET framework. I have been using this library for a very long time and I personally think it is one of the best APIs out there for JSON parsing.

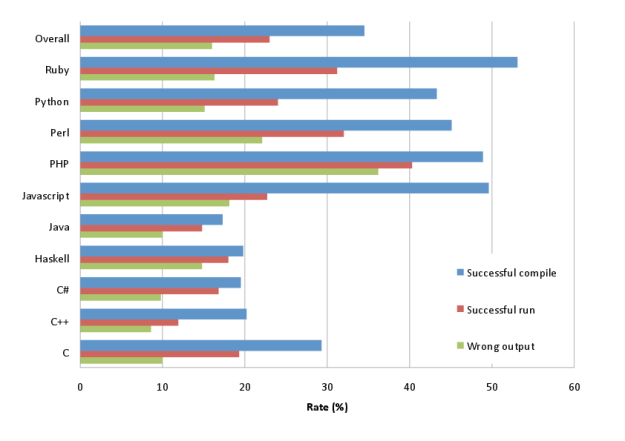

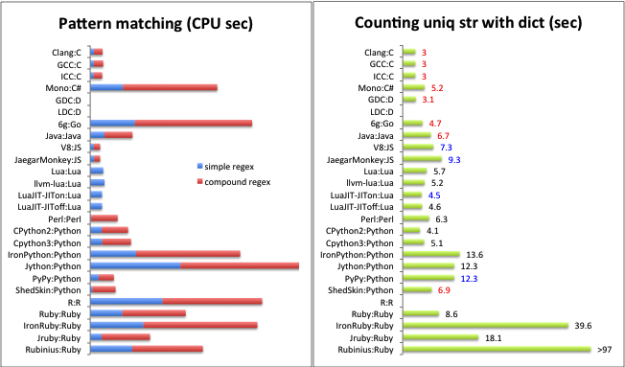

Figure 4: Json.NET comparison with other popular JSON serializers.

I will use the types provided by this library to demonstrate how you can parse JSON files into runtime objects, and how you can create JSON strings (serialize objects) from the objects you hand to the serializer. By the end of this post you will be able to create your own JSON-backed applications in the .NET framework (or any framework that supports C# programming).

Creating the object for this guide

In C#, we create classes to model real-world objects. We can then use this library to convert the runtime structure of such an object to a storable JSON document that can be used to:

- Send over the network.

- Save on the disk.

- Save for loading the object in that state later.

There are many other uses. The counterpart of this process is the deserialization of objects from their JSON notation back into runtime structures. First of all, I will create a sample class that we are going to convert to and from the JSON format.

using System; // DateTime lives in the System namespace

class Person

{

public int ID { get; set; }

public string Name { get; set; }

public bool Gender { get; set; }

public DateTime DateOfBirth { get; set; }

}

This object has the following diagram.

Figure 5: Person class diagram.

Frankly speaking, this object can be created in C++, in Java and in JavaScript too. If we use JSON to serialize this object, or any number of such objects, it can be transmitted over the network to peers. Once we have stringified the data in JSON notation, we can parse the string in other languages too. Almost every programming language has a JSON parser library.

We can redefine the structure in other programming languages and then use a JSON parser library on those machines to convert the string received over the network. I am going to cover only the C# side of the programming.

There is one thing you might want to know before we move forward. I have used the “DateTime” data type because I wanted to show how non-primitive types are converted when they are serialized to JSON notation. So, let’s begin the C# programming and see how the JSON concepts map to their C# counterparts.

Serializing the objects

In programming, serialization is the process of converting runtime objects to a storable format; here, a plain-text string. In other words, it converts the object structure from memory to a format that can be stored on disk. Serialization translates only the state of the objects, that is, it translates the properties of the object into a format that can be stored for later use. You can think of it as a synchronization process, where you store the current state of the objects on disk so that you can load the data later and resume your work from where you left it instead of starting from scratch.

Since we are talking about JSON here, we are interested in converting objects to their JSON notation for storage (serialization) and then, in the upcoming section, converting the JSON document back into runtime objects and data structures (deserialization). Simply, we are going to see how to convert objects into JSON and vice versa.

To serialize an object, you need an object that holds data. The object has a state in the application. We then call the library functions to serialize the object to its JSON notation. Like this,

using System;

using Newtonsoft.Json; // Json.NET namespace that provides JsonConvert

// Create the person

Person myself = new Person {

ID = 123,

Name = "Afzaal Ahmad Zeeshan",

Gender = true,

DateOfBirth = new DateTime(1995, 08, 29)

};

// Serialize it.

string serializedJson = JsonConvert.SerializeObject(myself);

// Print on the screen.

Console.WriteLine(serializedJson);

The function is called to serialize the object and (as you can clearly see) returns a string. This string holds the JSON notation of the object. The output returned was like this (yes, I formatted the JSON document for readability):

{

"ID": 123,

"Name": "Afzaal Ahmad Zeeshan",

"Gender": true,

"DateOfBirth": "1995-08-29T00:00:00"

}

We can store the data in a file, send it over the network or do whatever we have to. I am not going to cover that part because I think (and believe) that it depends entirely on your application design and how it is expected to work. Now, we need to understand how it happened.

The ID, Name and Gender are of types that you have already seen. However, the last field was actually of type DateTime, which got translated to a string in JSON. Why? The answer is simple: JSON doesn’t provide a native date-and-time type. Instead, a string notation is used to store the date value. The string is quite self-explanatory as to what the year, the month and the day are. The time part is also clearly visible.

One thing to note here is that C# and JSON can agree on using the “null” value for objects too. Thus if the name is set to a null value, JSON can support the same value of null in its own document format. In the next section, we will study how JSON is mapped to the objects.
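The date-to-string behaviour is not specific to C#. As a rough cross-check, here is a sketch in JavaScript (chosen only because it runs anywhere; it says nothing about how Json.NET works internally). The object mirrors the Person above, and the exact string format differs slightly from the Json.NET output because JavaScript writes UTC time with milliseconds.

```javascript
// The built-in Date type has no JSON counterpart, so JSON.stringify
// writes it as an ISO 8601 string.
const myself = {
  ID: 123,
  Name: "Afzaal Ahmad Zeeshan",
  Gender: true,
  DateOfBirth: new Date(Date.UTC(1995, 7, 29)) // months are zero-based: 7 = August
};

const serialized = JSON.stringify(myself);
console.log(serialized);

// Parsing gives the date back as a plain string; turning it into a Date
// again is the caller's job.
const parsed = JSON.parse(serialized);
console.log(typeof parsed.DateOfBirth); // "string"

const revived = new Date(parsed.DateOfBirth);
console.log(revived.getUTCFullYear()); // 1995
```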

Deserializing the JSON

In data interchange, this is the counterpart of the serialization process. In this process, we take the JSON document and parse it into a runtime object (or collection). There are many libraries available for parsing; however, I am going to keep using the same one that I have been using to demonstrate JSON in the C# programming environment. The method is very simple in this case too. I am going to use a JSON-formatted string variable as the input to parse into the object.

Note: You can use values from files, networks, web APIs and other resources from where you can get the JSON formatted document. Just for the sake of simplicity I am going to use a string variable to hold the values.

The sample JSON data can be like the following,

// A regular C# string literal cannot span lines, so the parts are concatenated.

string data = "{\"ID\": 123, \"Name\": \"Afzaal Ahmad Zeeshan\", " +

"\"Gender\": true, \"DateOfBirth\": \"1995-08-29T00:00:00\"}";

I used the following code to deserialize the JSON document.

// Deserialize it.

Person obj = JsonConvert.DeserializeObject<Person>(data);

// Print on the screen.

Console.WriteLine(obj.ID);

Now notice one thing here. This “DeserializeObject” function has two versions.

- A plain non-generic version.

- A generic version.

If you use the non-generic version, you will typically use C#’s dynamic object support and then access the properties on the result. For example, like the code below:

dynamic obj = JsonConvert.DeserializeObject(data);

Console.WriteLine(obj.ID);

Using the dynamic keyword allows you to bypass the compile-time check and resolve the “ID” property at runtime. This can be handy when you don’t want to create a class to deserialize against.

However, the version that I am using at the moment is different. It takes the target type as a generic parameter (in angle brackets), and Json.NET deserializes the JSON document and fills our object. We can also deserialize an array of objects this way, instead of “convincing” the program to iterate over the collection: we can have the JSON parsed directly as a list of objects. This helps us shorten the code and lets the compiler check the types.

var listOfObjects = JsonConvert.DeserializeObject<List<Person>>(data);

Notice that we are passing a “List<>” type. For this call the JSON in data must be an array of objects; the library converts it into a list of objects, and we can then iterate over the collection.

Some errors

There are a few errors that I would like to raise here, which might help you in understanding how JSON works. I will update this section later as I collect more data on this topic.

Type mismatch

If you try to deserialize JSON of one type (such as an object) into a type it cannot be converted to (such as an array), the library will raise an error. So do one of the following:

- Make sure you know which type to convert to, or

- Do not convert to a generically passed type; instead use the dynamic keyword to deserialize the object and then check its type at runtime. That still doesn’t guarantee that it will work.
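The idea of checking the parsed type at runtime can be sketched in JavaScript as follows. This is an analogy to the second option above, not Json.NET code; the helper name parseAsList is hypothetical.

```javascript
// Hypothetical helper: always hands the caller a list, whatever the root is.
function parseAsList(text) {
  const value = JSON.parse(text);
  if (Array.isArray(value)) {
    return value; // already a list
  }
  if (value !== null && typeof value === "object") {
    return [value]; // wrap a single object
  }
  // Anything else (number, string, boolean, null) is a type mismatch here.
  throw new TypeError("Expected a JSON object or array at the root");
}

console.log(parseAsList('{ "ID": 123 }').length);                // 1
console.log(parseAsList('[ { "ID": 1 }, { "ID": 2 } ]').length); // 2
```

Even with such a check, the individual items may still lack the properties you expect, which is why the runtime check is not a guarantee.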

My recommendations

Finally, I want to give you a few tips from my own experience. I started out working with data in a database system; SQL Server CE, I remember. But later I had to create applications that required somewhat complex structures and were not going to last another week. In such conditions, using a database table was not a good idea. Instead, I had to use a sample, temporary data source. In those days, XML was the popular format among my peers. JSON was something that most of them didn’t understand. So, in my own humble opinion, you should go with JSON where:

- Data needs to be shared cross-platform, cross-browser and cross-server. Databases may be helpful, but even they need serialization and stuff like that.

- You just need to test the stuff. You can target your Model to the JSON parsers and then in release mode change them to the actual data sources.

That is not all; the size of JSON is “amazingly” compact compared to XML. For example, have a look here:

// JSON

{

"name": "Afzaal Ahmad Zeeshan",

"age": 20,

"emptyObj": { }

}

// XML (a well-formed document needs a single root element)

<?xml version="1.0" encoding="UTF-8" ?>

<person>

<name>Afzaal Ahmad Zeeshan</name>

<age>20</age>

<emptyObj />

</person>

Even when the JSON has nothing in it, XML is bound to carry a root element and, typically, that declaration line. Then other problems come around, such as sending an array, or sending a bare native value. In JSON you can do this,

true

In XML, you don’t have any “true” type. So you are bound to send something extra, which can become a bottleneck in network transmission. I have been writing many articles, blogs and guides, and most of them are based on JSON if not on SQL Server.
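To put a rough number on the size difference, here is a small JavaScript sketch that compares the two documents from above with whitespace removed. The person root element in the XML string is my own assumption, added only so that the XML is well-formed.

```javascript
// The JSON document, written without extra whitespace.
const json = JSON.stringify({ name: "Afzaal Ahmad Zeeshan", age: 20, emptyObj: {} });

// The XML counterpart; the <person> root element is an assumed wrapper.
const xml =
  '<?xml version="1.0" encoding="UTF-8" ?>' +
  "<person><name>Afzaal Ahmad Zeeshan</name><age>20</age><emptyObj /></person>";

// Both strings are plain ASCII, so the length equals the size in bytes.
console.log(json.length, xml.length);
console.log(json.length < xml.length); // true
```

This is a tiny sample, so treat the result as an illustration rather than a benchmark.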

Figure 2: Server Manager application in Windows Server.
